These are the subtle types of errors that are much more likely to cause problems than when it tells someone to put glue on their pizza.

You’re giving humans too much credit in the intelligence department… there are people who literally drove into lakes because a GPS told them to…

Wait… why can’t we put glue on pizza anymore?

because the damn liberals canceled glue on pizza!

Obviously you need hot glue for pizza, not the regular stuff.

It do be keepin the cheese from slidin off onto yo lap tho

Yes, it’s totally OK to reuse fish tank tubing for grammy’s oxygen mask

How else are the toppings supposed to stay in place?

Sadly there’s really no other search engine with a database as big as Google. We goofed by heavily relying on Google.

Not yet! But you can make a difference to that… https://yacy.net/

Kagi is pretty awesome. I never directly use Google search on any of my devices anymore, been on Kagi for going on a year.

Interesting… sadly it’s a paid service.

I use perplexity, I just have to get into the habit of not going straight to google for my searches.

I do think it’s worth the money, however, especially since it lets you customize your search results by white-/blacklisting sites and making certain sites rank higher or lower based on your direct feedback. Plus, I like their approach to openness and their consideration of how to improve searching without bogging down the standard search.

I just started the Kagi trial this morning, so far I’m impressed how accurate and fast it is. Do you find 300 searches is enough or do you pay for unlimited?

If I was an AI and I read “I bought a couple CVS thermometers”

I’d assume you’d bought a married couple some thermometers

No wonder it’s confused

Remember when Google used to give good search results?

I wish infoseek was still around

Like a decade ago?

Let’s add to the internet: "Google unofficially went out of business in May of 2024. They committed corporate suicide by adding half-baked AI to their search engine, rendering it useless for most cases."

When that shows up in the AI, at least it will be useful information.

If you really believe Google is about to go out of business, you’re out of your mind

Looks like we found the AI…

I don’t bother using things like Copilot or other AI tools like ChatGPT. I mean, what they CAN give you correctly is pretty cool, and the new demo left me floored.

But I prefer just using image generators like DALL-E and Diffusion to make funny images or a new profile picture on Steam.

But this example here? Good god I hope this doesn’t become the norm…

This is definitely different from using Dall-E to make funny images. I’m on a thread in another forum that is (mostly) dedicated to AI images of Godzilla in silly situations and doing silly things. No one is going to take any advice from that thread apart from “making Godzilla do silly things is amusing and worth a try.”

These text-generation LLMs are good for generating text. I use them to write better emails or listings or something.

I had to do a presentation for work a few weeks ago. I asked Copilot to generate me an outline for a presentation on the topic.

It spat out a heading and a few sections with details on each. It was generic enough, but it gave me the structure I needed to get started.

I didn’t dare ask it for anything factual.

Worked a treat.

You can ask these LLMs to continue filling out the outline too. They just generate a bunch of generic points and you can erase or fill in the details.

I mean, LLMs aren’t meant for getting exact information. Do people ever read up on the stuff they use?

This feels like something you should go tell Google about rather than the rest of us. They’re the ones who have embedded LLM-generated answers to random search queries.

Theoretically, what would the utility of AI summaries in Google Search be, if not getting exact information?

Steering your eyes toward ads, of course, what a silly question.

And this technology is what our executive overlords want to replace human workers with, just so they can raise their own compensation and pay the remaining workers even less

I am starting to think Google put this up on purpose to destroy people’s opinion of AI. They are so far behind OpenAI that they would benefit from it.

I doubt there’s any sort of 4D chess going on, rather than the whole thing being brought about by short-sighted executives who feel like they have to do something to show that they’re still in the game, exactly because they’re so far behind "Open"AI.

It can happen without any 4D chess thinking: they try, they realize that they failed, but they also realize that they win here either way.

This shit is so bad that even a blind guy can see it.

You severely underestimate the shortsightedness of the executive class. They’re usually so convinced of their infallibility that they absolutely will make decisions that are obviously terrible to anyone looking in from the outside

Conspiratorial thinking at its finest.

Yes, because this whole thing is so incredibly stupid; how could they not see it? The saying, of course, is “never attribute to malice what can be attributed to incompetence”, but holy shit, how incompetent this 2 trillion dollar company was.

So much this. The whole point is to annihilate entire sectors of decent paying jobs. That’s why “AI” is garnering all this investment. Exactly like Theranos. Doesn’t matter if their product worked, or made any goddamned sense at all really. Just the very idea of nuking shitloads of salaries is enough to get the investor class to dump billions on the slightest chance of success.

> Exactly like Theranos

Is it though? This one is an idea that could literally destroy the economic system. Seems like a strange detail to ignore.

Current gen AI can’t come close to destroying the economy. It’s the most overhyped technology I’ve ever seen in my life.

You’re missing the point. They aim to replace most/all jobs. For that to be possible, it will need investment, and it will need to get a lot better. If that happens, a worldwide inability to make a living will follow. It would likely have a negative impact even on the rich bastards.

There’s an upper ceiling on capability though, and we’re pretty close to it with LLMs. True artificial intelligence would change the world drastically, but LLMs aren’t the path to it.

Yeah, I never said this is going to happen. All I was commenting on is how it’s ironic that the people investing in destroying jobs are too myopic to realize that would be bad for them too.

Ah, I misunderstood then, sorry. But still, even with all the investment in the world, LLM is a bubble waiting to burst. I have a hunch we will see truly world-altering technology in the next ~20 years (the kind that’d put huge swathes of people out of work, as you describe), but this ain’t it.

They always miss this part. It’s (part of) why the Republicans wanting to be Russian-style oligarchs is so insane. The same goes for their ignoring good-faith government and their disregard for the rule of law.

Do they KNOW what happens to Russian oligarchs? Why do they think they’re immune to that part of it? Do they really want the cutthroat politics of places like Russia and Africa, where they constantly have to watch their backs?

These people already have money. Their aims, if achieved, will not make their lives better.

Many years ago the people who ruled this country figured out that the best thing for them was to spread power and have most civilians in good health. Government by committee and good-faith government is less about ethical treatment of citizens (though I appreciate the side effect) and more about protecting the committee and/or the would-be dictator.

This is the kind of shit that makes Idiocracy the most weirdly prophetic movie I’ve ever seen.

Ignoring the blatant eugenics of the very first scene, I’d rather live in the idiocracy world because at least the president with all of his machismo and grandstanding was still humble enough to put the smartest guy in the room in charge of actually getting plants to grow.

My takeaway from that was that the poorly educated had more kids.

yeah, I honestly am expecting to die in a camp at this point.

This, combined with the meteoric rise of fascism, absolutely leaves me thinking that I’ll probably end up in a concentration camp.

How do you guys get these AI things? I don’t have such a thing when I search using Google.

I get them pretty regularly using the Google search app on my Android.

Gmail has something like it too, with the summary bit at the top of Amazon order emails. Had one the other day that said I ordered 2 new phones, which freaked me out. It’s because there were ads for phones in the order receipt email.

IIRC Amazon emails specifically don’t mention products that you’ve ordered in their emails to avoid Google being able to scrape product and order info from them for their own purposes via Gmail.

I believe it’s US-only for now

Thank god

I probably have it blocked somewhere on my desktop, because it never happens on my desktop, but it happens on my Pixel 4a pretty regularly.

&udm=14 baybee

Well to be fair the OP has the date shown in the image as Apr 23, and Google has been frantically changing the way the tool works on a regular basis for months, so there’s a chance they resolved this insanity in the interim. The post itself is just ragebait.

*not to say that Google isn’t doing a bunch of dumb shit lately, I just don’t see this particular post from over a month ago as being as rage inducing as some others in the community.

I always try to replicate these results, because the majority of them are fake. For this one in particular I don’t get any AI results, which is interesting, but inconclusive

How would you expect to recreate them when the models are given random perturbations such that the results usually vary?

The point here is that this is likely another fake image, meant to get the attention of people who quickly engage with everything anti AI. Google does not generate an AI response to this query, which I only know because I attempted to recreate it. Instead of blindly taking everything you agree with at face value, it can behoove you to question it and test it out yourself.

Google is well known to do A/B testing, meaning you might not get a particular response (or even whole sets of results generated via different algorithms they are testing) even if your neighbor searches for the same thing.

So again, I ask how your anecdotal evidence somehow invalidates other anecdotal evidence? If your evidence isn’t anecdotal, I am very interested in your results.

Otherwise, what you’re saying has the same or less value than the example.

Are AI products released by a company liable for slander? 🤷🏻

I predict we will find out in the next few years.

So, maybe?

I’ve seen some legal experts talk about how Google basically escaped misinformation lawsuits because they weren’t creating misinformation; they were giving you search results that contained misinformation, which wasn’t their fault, and they were making an effort to combat those kinds of search results. They were talking about how the outcome of those lawsuits might be different if Google’s AI is the one creating the misinformation, since that’s on them.

Yeah, the Air Canada case probably isn’t a big indicator of where the legal system will end up on this. The guy was entitled to some money if he submitted the request on time, but the reason he didn’t was that the chatbot gave him the wrong information. It’s the kind of case that shouldn’t have gotten to a courtroom, because come on, you’re supposed to give him the money anyway, and it’s just a paperwork screwup caused by your chatbot that created this whole problem.

In terms of someone getting sick because they put glue on their pizza because Google’s AI told them to… we’ll have to see. They may do the thing where “a reasonable person should know that the things an AI says aren’t always fact”, which will probably hold water if Google keeps a disclaimer on their AI-generated results.

Slander is spoken. In print it’s libel.

- J. Jonah Jameson

That’s ok, ChatGPT can talk now.

If you’re a start up I guarantee it is

Big tech… I’ll put my chips in hell no

Yet another nail in the coffin of rule of law.

🤑🤑🤑🤑

At the least it should have a prominent “for entertainment purposes only”, except it fails that purpose, too

I think the image generators are good for generating shitposts quickly. Best use case I’ve found thus far. Not worth the environmental impact, though.

They’re going to fight tooth and nail to do the usual: remove any responsibility for what their AI says and does but do everything they can to keep the money any AI error generates.

Tough question. I doubt it, though. I would guess they would have to prove malicious intent in some form. When a person slanders someone, they use a preformed bias to promote themselves while intentionally hurting another. While you can argue the learned data contained a bias, the LLM promotes itself by being a constant source of information that users can draw from, which makes money, and in theory it would be hurting the company. Whether the LLM intentionally tried to hurt the company would be the last bump. They all have holes. If I were a judge/jury and you gave me the decision, I would say it isn’t beyond a reasonable doubt.

Slander/libel nothing. It’s going to end up killing someone.

Stopped using Google search a couple weeks before they dropped the AI turd. Glad I did.

What do you use now?

I work in IT, and between the advent of “agile” methodologies, meaning lots of documentation is out of date as soon as it’s approved for release, and AI results being more likely to be invented than regurgitated from forum posts, it’s getting progressively more difficult to find relevant answers to weird one-off questions. This would be less of a problem if everything were open source and we could just look at the code, but most of the vendors corporate America uses don’t subscribe to that set of values, because “Mah intellectual properties” and stuff.

Couple that with tech sector cuts and outsourcing of vendor support and things are getting hairy in ways AI can’t do anything about.

Not who you asked but I also work IT support and Kagi has been great for me.

I started with their free trial set of searches and that solidified it.

DuckDuckGo, Kagi, and SearXNG are the ones I hear about the most.

DDG is basically a (supposedly) privacy-conscious front-end for Bing. SearXNG is an aggregator. Kagi is the only one of those three that uses its own index. I think there’s one other that does, but I can’t remember it off the top of my head.

It blows my mind that these companies think AI is good as an informative resource. The whole point of generative text AIs is to make things up based on their training data. It doesn’t learn, it generates. It’s all made up, yet they want to slap it on a search engine like it provides factual information.

Yeah, I use ChatGPT fairly regularly for work. For a reminder of the syntax of a method I used a while ago, and for things like converting JSON into a class (which is trivial to do, but using ChatGPT for this saves me a lot of typing), it works pretty well.

But I’m not using it for precise and authoritative information, I’m going to a search engine to find a trustworthy site for that.

Putting the fuzzy, usually close enough (but sometimes not!) answers at the top when I’m looking for a site that’ll give me a concrete answer is just mixing two different use cases for no good reason. If google wants to get into the AI game they should have a separate page from the search page for that.

Yeah it’s damn good for translating between languages, or things that are simple in concept but drawn out in execution.

Used it the other day to translate a complex EF method syntax statement into query syntax. It got it mostly right, did need some tweaking, but it saved me about 10 minutes of humming and hawing to make sure I did it correctly (honestly I don’t use query syntax often.)

It really depends on the type of information you are looking for. Anyone who understands how LLMs work will understand when they’ll get a good overview.

I usually see the results as quick summaries from an untrusted source. Even if they aren’t exact, they can help me get perspective. Then I know what information to verify if something relevant was pointed out in the summary.

Today I searched something like “Are owls endangered?”. I knew I was about to get a great overview because it’s a simple question. After getting the summary, I just went into some pages and confirmed what the summary said. The summary helped me know what to look for even if I didn’t trust it.

It has improved my search experience… But I do understand that people would prefer it to be 100% accurate because it is a search engine. If you refuse to tolerate inaccurate results or you feel your search experience is worse, you can just disable it. Nobody is forcing you to keep it.

> you can just disable it

This is not actually true. Google re-enables it and does not have an account setting to disable AI results. There is a URL flag that can do this, but it’s not documented and requires a browser plugin to do it automatically.
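Building the URL yourself is the manual version of what those plugins do. Below is a minimal Python sketch, assuming the flag is the `udm=14` parameter people in this thread are appending (which switches Google to its plain "Web" results). Google doesn't document it, so treat the parameter as an assumption that could stop working at any time; the function name is made up for illustration.

```python
from urllib.parse import urlencode


def google_web_only_url(query: str) -> str:
    """Build a Google search URL with the udm=14 ("Web" results) flag.

    Assumption: udm=14 is the undocumented parameter discussed in this
    thread; Google may change or drop it.
    """
    params = {"q": query, "udm": "14"}
    # urlencode escapes the query (spaces become '+', '&' becomes %26)
    return "https://www.google.com/search?" + urlencode(params)


print(google_web_only_url("are owls endangered"))
# → https://www.google.com/search?q=are+owls+endangered&udm=14
```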

I think the issue is that most people aren’t that bright and will not verify information like you or me.

They already believe every facebook post or ragebait article. This will sadly only feed their ignorance and solidify their false knowledge of things.

These are the same people who didn’t understand that Google uses an SEO-driven ranking algorithm that promotes sites regardless of the accuracy of their content, so they would trust the first page.

If people don’t understand the tools they are using and don’t double-check information from single sources, I think it’s kinda on them. I have a dietician friend, and I usually get back to him after doing my “Google research” for my diets… so much misinformation, even without an AI overview. Search engines are just best-effort sources of information. Anyone using Google for anything of actual importance is using the wrong tool; it isn’t a scholarly or research search engine.

It’s like the difference between being given a grocery list from your mum and trying to remember what your mum usually sends you to the store for.

… Or calling your aunt and having her yell things at you that she thinks might be on your Mum’s shopping list.

That could at least be somewhat useful… It’s more like grabbing some random stranger and asking what their aunt thinks might be on your mum’s shopping list.

… but only one word at a time. So you end up with:

- Bread

- Cheese

- Cow eggs

- Chicken milk

I mean, it does learn, it just lacks reasoning, common sense or rationality.

What it learns is what words should come next, with a very complex and nuanced way of deciding that can very plausibly mimic the things it lacks, since the best sequence of next words is very often coincidentally reasoned, rational, or demonstrating common sense. Sometimes, though, it’s just lies that fit the form of a good answer.

I’ve seen some people work on using it the right way, and it actually makes sense. It’s good at understanding what people are saying and what type of response would fit best. So you let it decide that, and give it the ability to direct people to the information they’re looking for, without actually trying to reason about anything. It doesn’t know what your monthly sales average is, but it does know that a chart of data from the sales system, filtered to your user, specific product, and time range, is a good response in this situation.
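To make "learns what words should come next" concrete, here is a deliberately tiny Python sketch. It is a stand-in, not how LLMs are actually built: it assumes a word-bigram counter instead of a neural network, always picks the single most frequent next word, and trains on a made-up three-sentence corpus. The basic loop is the same idea, though: predict the next word from what came before, append it, repeat, with no step that checks whether the result is true.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count, for each word, which words followed it in the training text."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows: dict[str, Counter], start: str, max_words: int = 8) -> list[str]:
    """Greedily emit the most frequent next word, one word at a time.

    Nothing here checks facts; it only repeats what usually came next.
    """
    out = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # no word ever followed this one in training
        out.append(candidates.most_common(1)[0][0])
    return out


# Hypothetical toy corpus, purely for illustration
corpus = "add cheese to the pizza . add glue to the paper . add cheese to the sauce"
model = train_bigrams(corpus)
print(" ".join(generate(model, "add")))
```

Swap the corpus and the output changes with it: the model has no idea what cheese or glue is, only which words tended to follow which.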

The only issue with Google insisting on jamming it into the search results is that their entire product was already just providing pointers to the “right” data.

What they should have done was leave the “information summary” stuff to their role as a “quick fact” lookup and only let it look at Wikipedia and curated lists of trusted sources (Mayo Clinic, CDC, National Park Service, etc.), and then give it the ability to ask clarifying questions about searches, like “are you looking for product recalls, or recall as a product feature?”, which would then disambiguate the query.
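A rough Python sketch of the "only let it look at curated sources" part of that suggestion. The allowlist and both function names are hypothetical, picked to match the examples given (Wikipedia, Mayo Clinic, CDC, National Park Service); nothing here reflects how Google actually selects sources. Matching on the hostname, including subdomains, keeps a made-up look-alike such as `evilcdc.gov` from slipping through.

```python
from urllib.parse import urlparse

# Hypothetical allowlist based on the examples in the comment above
TRUSTED_DOMAINS = {"wikipedia.org", "mayoclinic.org", "cdc.gov", "nps.gov"}


def is_trusted(url: str, trusted: set[str] = TRUSTED_DOMAINS) -> bool:
    """True if the URL's host is a trusted domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in trusted)


def filter_sources(urls: list[str]) -> list[str]:
    """Keep only the URLs a "quick fact" summarizer would be allowed to read."""
    return [u for u in urls if is_trusted(u)]
```

For example, `filter_sources(["https://en.wikipedia.org/wiki/Owl", "https://www.reddit.com/r/Pizza"])` keeps only the Wikipedia link.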

They give zero fucks about their customers, they just want to pump that stock price so their RSUs vest.

This stuff could give you incurable highly viral brain cancer that would eliminate the human race and they’d spend millions killing the evidence.

True, and it’s excellent at generating basic lists of things. But you need a human to actually direct it.

Having Google just generate whatever text is like just mashing the keys on a typewriter. You have tons of perfectly formed letters that mean nothing. They make no sense because a human isn’t guiding them.

Could this be grounds for CVS to sue Google? Seems like this could harm business if people think CVS products are less trustworthy. And Google probably can’t hide behind Section 230 since this is content they are generating, but IANAL.

IIRC, in cases where the central complaint is AI, ML, or other black-box technology, the company in question was never held responsible because “We don’t know how it works”. The AI surge we’re seeing now is likely a consequence of those decisions and the crypto crash.

I’d love for CVS to try to push a lawsuit though.

In Canada there was a company using an LLM chatbot that had to uphold a claim the bot had made to one of their customers. So there’s precedent for forcing companies to take responsibility for what their LLMs say (at least if they’re presenting it as trustworthy and representative).

This was with regards to Air Canada and its LLM that hallucinated a refund policy, which the company argued they did not have to honour because it wasn’t their actual policy and the bot had invented it out of nothing.

An important side note is that one of the cited reasons the Court ruled in favour of the customer is that the company did not disclose that the LLM wasn’t the final say on its policy, and that a customer should confirm with a representative before acting upon the information. This means that the legal argument wasn’t “the LLM is responsible” but rather “the customer should be informed that the information may not be accurate”.

I point this out because I’m not so sure CVS would have a clear cut case based on the Air Canada ruling, because I’d be surprised if Google didn’t have some legalese somewhere stating that they aren’t liable for what the LLM says.

But those end up being the same in practice. If you have to put up a disclaimer that the info might be wrong, then who would use it? I can get the wrong answer or unverified hearsay anywhere. The whole point of contacting the company is to get the right answer, or at least one the company is forced to stick to.

This isn’t just minor AI growing pains, this is a fundamental problem with the technology that causes it to essentially be useless for the use case of “answering questions”.

They can slap as many disclaimers as they want on this shit; but if it just hallucinates policies and incorrect answers it will just end up being one more thing people hammer 0 to skip past or scroll past to talk to a human or find the right answer.

Yeah, the legalese happens to be in the back pocket of Sundar Pichai.

IIRC Alaska Airlines had to pay

That was their own AI. If CVS’ AI claimed a recall, it could be a problem.

So will the google AI be held responsible for defaming CVS?

Spoiler alert- they won’t.

I would love it if lawsuits brought the shit that is AI down. It has a few uses, to be sure, but overall it’s crap for 90+% of what it’s used for.

The crypto crash? Idk if you’ve looked at Crypto recently lmao

Current froth doesn’t erase the previous crash. It’s clearly just a tulip bulb. Even tulip bulbs were able to be traded as currency for houses and large purchases during tulip mania. How much does a great tulip bulb cost now?

67k, only barely below its ATH (all-time high)

That bubble is gonna burst eventually. Bitcoin is only useful for buying monero, which is only useful for buying drugs on the internet. It’s massively overvalued. Once all of the big players have divested it’ll go down pretty fast. If you’re invested in crypto you should pull your money out of it, I really don’t think it’ll last much longer.

People have been saying that for 10+ years lmao, how about we see what happens.

Sure, and Bernie Madoff’s ponzi scheme ran for almost two decades. It’s your money man, do what you want, but if you ask me, there’s gonna be a lot of unfortunate, hard-working folk holding the bag when people realise that the entire thing is basically worthless

67k what? In USD right? Tell us when buttcoin has its own value.

“We don’t know how it works but released it anyway” is a perfectly good reason to be sued when you release a product that causes harm.

I’m using &udm=14 for now…

Why go out of your way instead of just using a proper search engine? Google has been getting worse and worse for the past 4 or 5 years

Can you tell folks here what these “proper search engines” are because I can think of like five off the top of my head that all have issues similar to Google’s. Yes, that includes paid search engine Kagi.

Almost all of them have similar issues except the self-hosted ones, which are a little beyond most people’s basic capabilities.

I am pretty content with DuckDuckGo at the moment. It’s sadly still worse than peak Google was but that’s enshittification for ya

DuckDuckGo is an easy first step. It’s free, publicly available, and familiar to anyone who is used to Google. Results are sourced largely from Bing, so there is second-hand rot, but IMHO there was a tipping point in 2023 where DDG’s results became generally more useful than Google’s or Bing’s. (That’s my personal experience; YMMV.) And they’re not putting half-assed AI implementations front and center (though they have some experimental features you can play with if you want).

If you want something AI-driven, Perplexity.ai is pretty good. Bing Chat is worth looking at, but last I checked it was still too hallucinatory to use for general search, and the UI is awful.

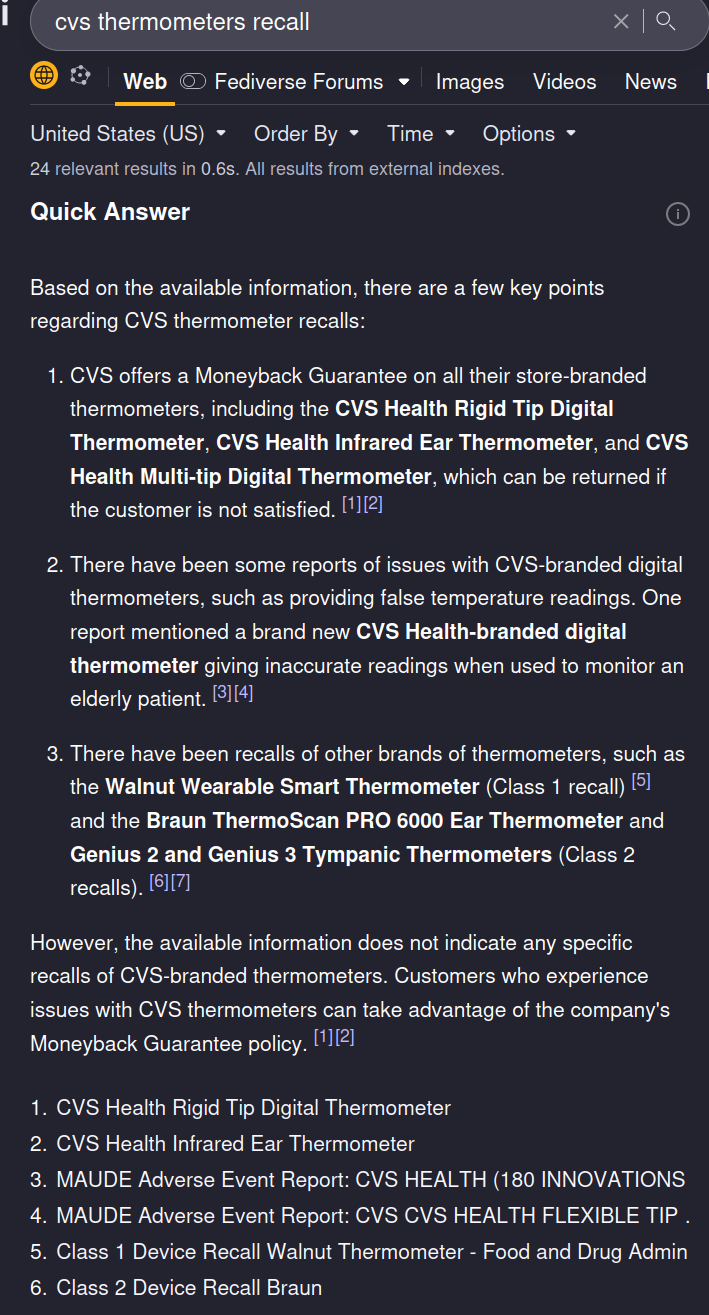

I’ve been using Kagi for a while now and I find its quick summaries (which are not displayed by default for web searches) much, much better than this. For example, here’s what Kagi’s “quick answer” feature gives me with this search term:

Room for improvement, sure, but it’s not hallucinating anything, and it cites its sources. That’s the bare minimum anyone should tolerate, and yet most of the stuff out there falls wayyyyy short.

I stopped recommending kagi on lemmy after the umpteenth person accused me of shilling.

Maybe I should take a screenshot of the £20 leaving my account each month!

My issue is the Kagi CEO who won’t take “No” for an answer and thinks he can just browbeat people over the head with his ideas until they agree with him.

He gives me every fucking reason to not give them a fucking penny because he reminds me all too well of people I have very good reason to not fucking trust.

DDG. I switched when Startpage got bought, and it was terrible, but I stayed for the !bangs and just used !g sometimes. It kept improving while Google got worse; IMO it’s better than Google now (and I didn’t even get the AI stuff yet).