Standard business practice.

Get it out there, tout it as the new biggest thing. Most people won’t know it’s not busted. Improve said item once the company behind it actually figures out what they’re doing. Say it’s better, when it’s basically the original thing you promised. ??? Profit.

I wish tech companies would do this more. Put a warning or label on it if you have to, but interacting with that early version of the Bing chatbot was the most fun I've had with tech in a while. You don't have to install a ton of guardrails on everything before it goes out to the public.

No number of restrictions or warnings or labels or checkboxes will stop people from writing articles about all the scandalous things Microsoft's chatbot said.

I had a conversation with Bing today, asking it for configuration snippets for logging on my Lemmy instance.

It happily spat out a config, which I cut and pasted and which subsequently barfed.

I gave Bing the error message, and it explained that the parameters it had previously supplied don't exist.

Good job, MS!

Has Bing gone full Tay and started agreeing that Hitler was right and to fire up the gas chambers yet?

Rushing out general-purpose AI, what could go wrong?

I mean, it was just a chat bot.

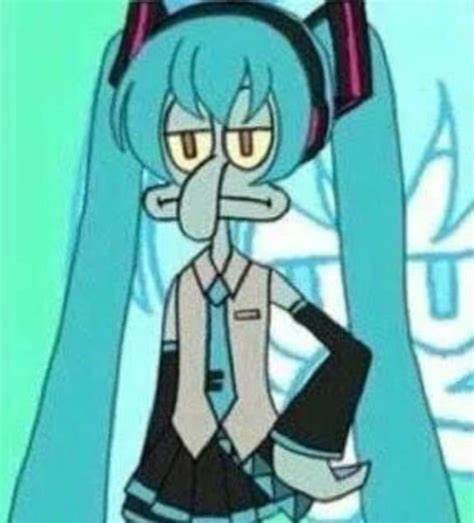

I know the ethics behind it are questionable, especially with the way they implemented it, but honestly, back when they first started testing it, I really enjoyed watching it break and be rude/passive-aggressive. It was clear it wasn't ready at all, but it was so funny. When it was breaking I would just sit there having fights with it over random bullshit. That's what made it feel "real" more than anything else.

In the future, if my AI chatbot doesn't have an option to add some bitchiness to it, I don't want it. I need my AI to have some attitude.

Interesting angle! I can see the need for both - maybe there’s room for a snarky option??

maybe. maybe an ai bot that just argues with you would have some potential. like, argue with this AI instead of a random person online kinda thing

Didn’t they do this before and people turned it racist in like, 12 hours? I think Internet Historian had something on it

Yup. You’re thinking of Tay.

AI Hallucinations: https://www.sify.com/ai-analytics/the-hilarious-and-horrifying-hallucinations-of-ai/

LOL