Speedy thing goes in, speedy thing comes out.

This’ll bring their fax machines up to the current century for sure.

Yay, I can’t wait for Comcast to implement this so you can blow through your 1.2 TB data cap in a second and they can charge you $10 for every 50 GB you go over.

/thread

that’s a lot of floppies

22Pb/s FTTH when?

in a few hundred years if ever. I doubt that the average person will ever need more than 1 Tb/s

to give you crazy numbers:

Raw 32k video with 12-bit color at 60fps is around 50 GB per second.
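For anyone wanting to check that figure, here is a rough sketch of the arithmetic. It assumes "32k" means 30720x17280 pixels and that "raw" means one 12-bit sample per pixel (as in Bayer sensor data). Neither is stated above, and with full 12-bit RGB per pixel the result would be three times larger.

```python
def raw_video_rate_bytes_per_s(width, height, bits_per_pixel, fps):
    """Uncompressed video data rate in bytes per second."""
    bits_per_frame = width * height * bits_per_pixel
    return bits_per_frame * fps / 8

# Assumed: 32K = 30720 x 17280, one 12-bit sample per pixel (raw Bayer).
rate = raw_video_rate_bytes_per_s(30720, 17280, 12, 60)
print(f"{rate / 1e9:.1f} GB/s")
```

Under those assumptions it comes out at roughly 48 GB/s, which is in line with the "around 50" quoted.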

Aside from me obviously joking about home 22Pb/s, I’ll tell you a story.

In the early 90s, I was gifted a modem. I used it to connect to BBS systems. It had two outgoing call speeds: 300bit/s sync and 1200/75bps. Since I’d never connected my computer to the outside world, the whole thing seemed amazing. Many BBSes had modems that couldn’t cope with 1200/75, as it was an odd speed, so I had to swap to 300 for those. But it was still an amazing time. File sizes were pretty small, so it really wasn’t a huge problem.

In the mid 1990s, when I had a 28.8k modem (and later 56k) to connect to the internet (and by the way, paying for the phone calls too), the always-on 64k leased line (£1k per month) seemed like a dream. The 10Mbit coax ethernet at the office seemed like light speed. Hell, it was faster than the local hard disk (caching improved that, mind you). I remember in the office where we had an ISDN modem setup, chaining them together to get 128Kbit seemed like light speed. Later we got a 256Kbit leased line in the office, and it was amazing in terms of speed. I effectively ran a mini ISP that some of us connected to, to get free internet. It had 4 USR Courier modems.

Then, in 1999, I was lucky enough to be enrolled on a trial for 2Mbit ADSL. It was amazing: 2Mbit down, 256Kbit up. This was groundbreaking. Web pages loaded instantly. Everything was so much faster. LANs in the office and at home were up to 100Mbit, and that seemed pretty damn fast. We were sure we’d not need more than that.

Then, 8Mbit DSL arrived. Again, amazing leap forward. Gigabit LAN became the norm, and again, who would have thought it would be too slow for anything? After all, we were all using hard disks, and they really weren’t that fast after all. ADSL2+ arrived with speeds up to 24Mbit, and those of us unable to get the higher sync rates started to suffer the internet being just a bit too slow.

Then we got VDSL and faster cable internet. 80Mbit, 100Mbit, 150Mbit. These seemed overkill for many. But pretty soon we were downloading 4k video and 150GB games onto 5GB/s+ SSD drives. This started to feel slow to some.

Now we’re at a place where FTTH 1Gbit is becoming quite common. Many ISPs here in the UK are offering packages with speeds between 2 and 5Gbit/s too. The tech they use is apparently good for up to 50Gbit/s.

Now, with this history of speed increases leading to demand increases, why do you think it will stop at 1Tb/s? Maybe we cannot currently imagine why we’d need such speeds. But someone will find a way to fill such connections. Don’t limit yourself to just expanding what we do now.

Maybe you’re right, but I honestly would never say never when it comes to computing.

I can see many future domestic uses that might need multiple terabytes per second of bandwidth, especially everything around AI and machine learning. Imagine your device having instant access to multiple terabytes of a shared global library of machine learning data. It’s a bit dystopian, but that’s another topic.

Another thing you can imagine is being able to play games directly “from the cloud” but processing them on your hardware. Imagine you have an xbox, but don’t have any games stored on it. Everything you play is fetched in real time from the server but still processed and computed on your hardware. So you get crisp clear image and visuals but games can now be so realistic that they themselves need 1-2TB of storage to store the whole game. So, with enough internet speed, we wouldn’t need to worry about storage space anymore and how big a game is. You just buy the game and click play and play instantly, but still computing the game locally. Or, if you still need to download and store the game, having a game be a 700GB download might be as “casual” as a 5GB game is nowadays (remember when we got shocked that games started to be more than 1-2GB? Now, 80GB is common). And you will be able to download the whole game in a few minutes. Game worlds can be huge and still be downloaded in minutes. Storage is also evolving day by day so 10TB of SSD might actually be kinda cheap in 10 years.
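To put "a few minutes" into numbers, here is a minimal sketch of the ideal download time for that 700GB game. The three link speeds are ones mentioned elsewhere in this thread. Protocol overhead, server limits and SSD write speed are ignored, so real times would be longer.

```python
def download_seconds(size_bytes, link_bits_per_s):
    """Ideal transfer time, ignoring protocol overhead and storage speed."""
    return size_bytes * 8 / link_bits_per_s

GAME_BYTES = 700e9  # the 700GB game from the comment above

for label, rate in [("1 Gbit/s", 1e9), ("50 Gbit/s", 50e9), ("1 Tbit/s", 1e12)]:
    print(f"{label}: {download_seconds(GAME_BYTES, rate):.1f} s")
```

That works out to about 93 minutes at 1Gbit/s, under 2 minutes at 50Gbit/s, and a few seconds at 1Tb/s.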

You can also imagine games so complex that they communicate huge amounts of data between players. We could even share the exact angle at which every leaf on a tree is moving in the wind, so all players on that server would see the exact same movement of the leaves. The bandwidth is so high that you can actually start to share even the most insignificant details across a multiplayer world.

You can also imagine Netflix being able to stream 8K 60fps HDR to your phone as easily as nowadays it streams 720p.

Virtual Reality. It needs insane amounts of bandwidth for anything. If you want to share a truly multiplayer VR experience you need all the bandwidth you can get. VR cloud gaming could be a thing since they could now transmit 2x 4K 60fps streams with minimal latency.

These are all casual domestic uses for unlimited bandwidth applications. Don’t worry, we will always need more and more bandwidth, because with more bandwidth always comes new tech, and with new tech the need for more bandwidth also increases. Infinite cycle.

Now imagine all of that plus more direct peer to peer connections to get the lowest latency!

Wall Street just put in a bulk order.

Actually Wall Street intentionally increases their latency

Some guy figured out that trades were getting sniped due to some locations having more latency than others relative to the trade location, so he developed a solution that intentionally lags the connection on different wires so that everyone gets their trade updates simultaneously and can’t snipe each other to up the prices on other people’s buys.
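The idea as described (not the actual system, which I haven’t seen) can be sketched like this: pad every route with extra delay so that each one takes as long as the slowest. The site names and latency numbers below are made up for illustration.

```python
def equalizing_delays(latency_us):
    """Extra delay per destination so every delivery takes as long as the slowest."""
    slowest = max(latency_us.values())
    return {dest: slowest - lat for dest, lat in latency_us.items()}

# Hypothetical one-way latencies in microseconds; names and values are invented.
latencies = {"site_a": 120, "site_b": 350, "site_c": 480}
delays = equalizing_delays(latencies)
print(delays)
```

The slowest route gets no added delay and every other route is held back by the difference, so everything lands at the same moment.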

The financial types are generally more interested in hollow core fiber, to get their latencies even further down for high frequency trading, because light travels at almost c in hollow core but only at 2/3 c in a solid glass core.

Isn’t optical just as much about the end points as the cables?

Yes, but the fiber has become an issue. They’re doing QAM signaling in fiber now.

optical fiber speed record

Isn’t that simply the speed of light, always? ;-)

It is pretty confusing that we refer to the volume of data as speed in networks.

We don’t. The measure is bits/s, which is a speed because it’s measured relative to time. 1 TB is a volume/amount, 1TB/s is a speed.

Nope, if we are talking about the actual speed of the signal, optical fiber is relatively slow at ~1/3 c, compared to air or copper where it’s almost c. They’re using ‘speed’ meaning bandwidth. A van full of sd cards would have a massive bandwidth, but a very slow actual speed.

Actually it’s about 2/3 c, the refractive index of normal telco fibers (G.652 and G.655) is around 1.47
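A quick sketch of where 2/3 c comes from, using v = c / n with the 1.47 index quoted above. The 1000 km route is a hypothetical example, and n = 1.0 is an idealized stand-in for hollow core (which is "almost c", not exactly c).

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum

def one_way_latency_ms(distance_km, refractive_index):
    """Propagation delay only; ignores routing, amplifiers and endpoints."""
    speed_km_per_s = C_KM_PER_S / refractive_index
    return distance_km / speed_km_per_s * 1000

DISTANCE_KM = 1000  # hypothetical route length

print(f"fraction of c at n=1.47: {1 / 1.47:.2f}")
print(f"solid core:  {one_way_latency_ms(DISTANCE_KM, 1.47):.2f} ms")
print(f"idealized hollow core: {one_way_latency_ms(DISTANCE_KM, 1.0):.2f} ms")
```

Over 1000 km that is roughly 4.9 ms versus 3.3 ms one way, which is the gap the trading firms care about.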

This is just what I need for my goal of backing up both the Internet Archive and Wikipedia on local storage every day.

For those wanting a bit of a summary.

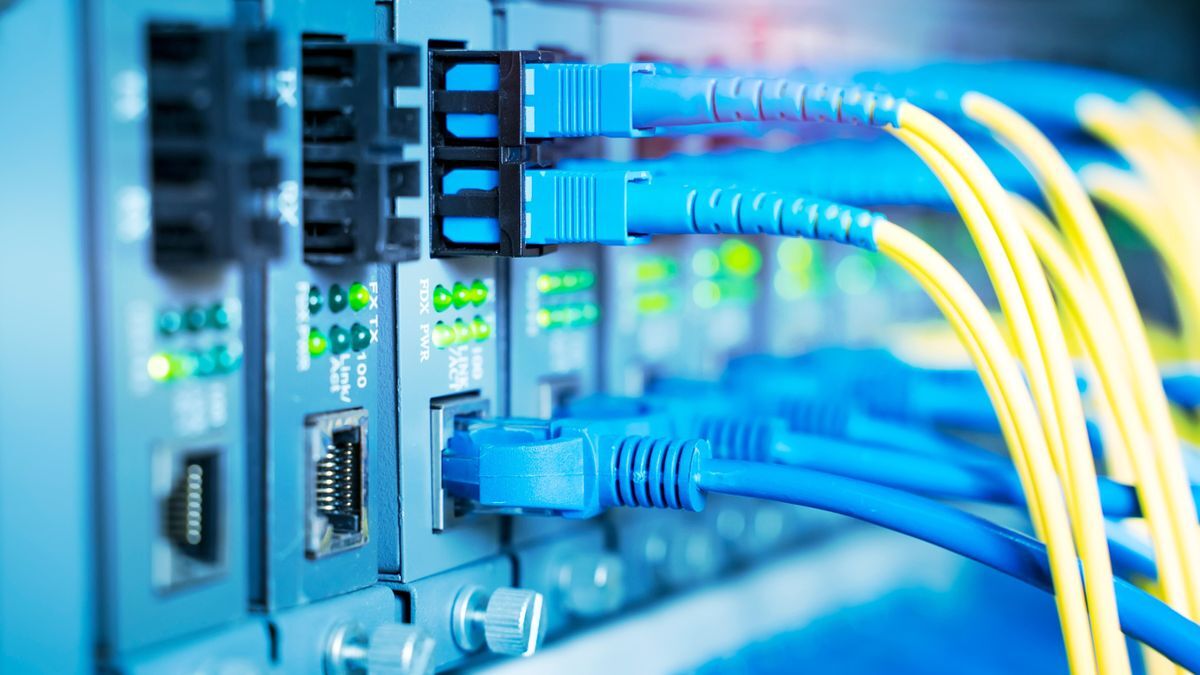

transmitting up to 22.9 petabits per second (Pb/s) through a single optic cable composed of multiple fibers

The breakthrough isn’t things moving faster but more fibers per cable. So you can transfer more bits in parallel.

That’s still a good breakthrough because, for lots of reasons, packing more fibers in isn’t as straightforward as one would think.

The breakthrough isn’t things moving faster but more fibers per cable.

No, it’s actually more cores per fiber, and using those very well for space division multiplexing on top of the normal wavelength division multiplexing. They are talking about 22.9 Pb/s per fiber, not per cable; the Tom’s Hardware article is just wrong.

Cables can already contain hundreds of fibers, for example 576, or into the thousands if you use stacks of ribbon cables in the subunits, for example 3456.

This is really interesting. Thank you for providing good insight!